Audetic: Building the Platform Slowly on Purpose

Audetic is not a startup sprint. It is not a VC-backed race to ship features as fast as possible.

It is a long-term side project I have been building deliberately, informed by years of watching products fail for avoidable reasons. Instead of chasing speed, I am focused on building a platform that is scalable, secure, and resilient enough to grow without needing a rewrite every year.

That choice shapes everything about how Audetic is built.

In this post, I want to share an early look at the architecture and CI/CD pipeline behind Audetic, and why I made the decisions I did. One caveat: as things evolve, the details are subject to change, and that's OK!

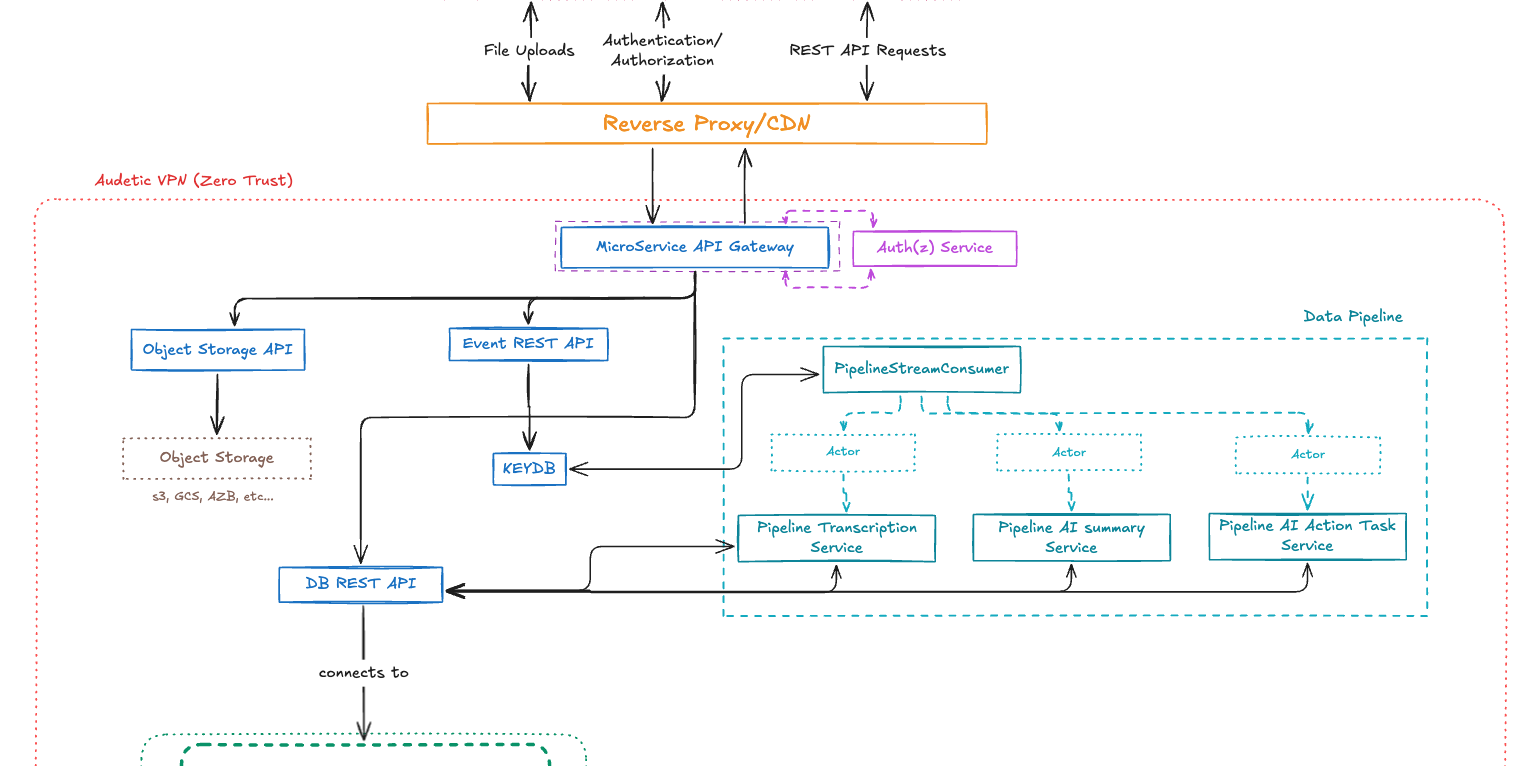

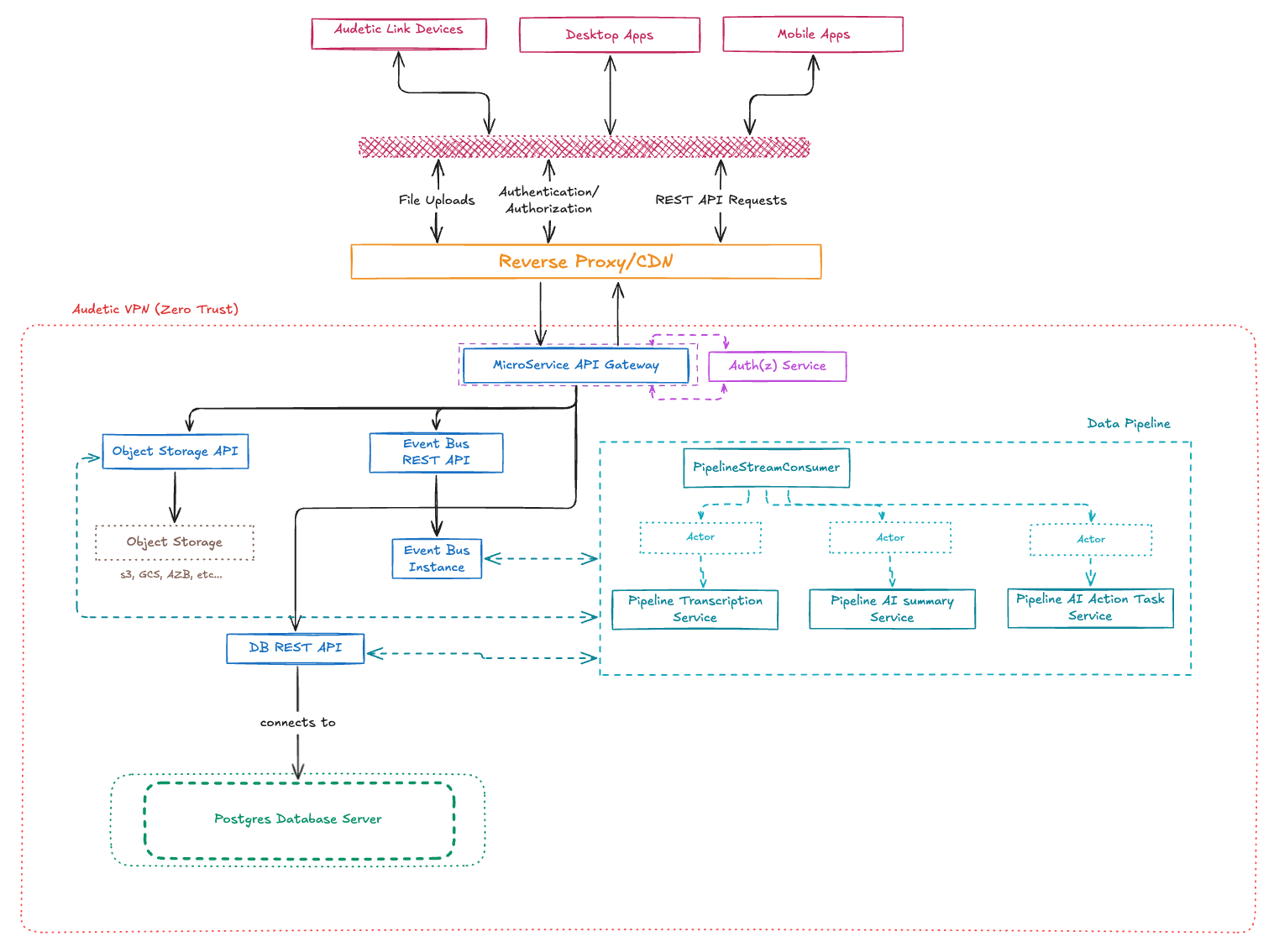

A Modular, Event-Driven Architecture

Audetic is designed as a collection of loosely coupled services, each responsible for a narrow slice of functionality. Audio ingestion, transcription, enrichment, and AI-driven analysis and workflows are all handled independently.

This structure is intentional.

Each service can evolve, be replaced, or be scaled without dragging the rest of the system along with it. I am designing for change rather than optimizing prematurely.

These services can also be reused in other apps and platforms I am building, or want to build, that need the same capabilities.

At the center of the system is an event-driven pipeline. I currently use a lightweight KeyDB instance as an event bus to coordinate near real-time communication between services. It gives me flexibility without unnecessary operational overhead at this stage. If and when the system needs heavier streaming infrastructure, it can be swapped for Kafka or something similar with minimal rework to the core logic.
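
Here is a minimal Python sketch of what keeps that swap cheap. It is not Audetic's actual code: the `EventBus` interface, the `InMemoryBus` stand-in, and the topic names `audio.ingested` and `transcript.ready` are all hypothetical. The idea is that service logic depends only on a small publish/subscribe interface, so a KeyDB-backed or Kafka-backed adapter could sit behind it without touching that logic.

```python
from collections import defaultdict
from typing import Callable, Protocol

# A handler receives the event payload as a plain dict.
Handler = Callable[[dict], None]


class EventBus(Protocol):
    """Hypothetical interface that service code is written against."""

    def publish(self, topic: str, event: dict) -> None: ...

    def subscribe(self, topic: str, handler: Handler) -> None: ...


class InMemoryBus:
    """Stand-in backend for illustration only. A KeyDB or Kafka adapter
    would expose the same two methods."""

    def __init__(self) -> None:
        self._handlers = defaultdict(list)

    def publish(self, topic: str, event: dict) -> None:
        # Deliver synchronously to every handler registered for the topic.
        for handler in self._handlers[topic]:
            handler(event)

    def subscribe(self, topic: str, handler: Handler) -> None:
        self._handlers[topic].append(handler)


def wire_transcription(bus: EventBus, transcripts: list) -> None:
    """Core logic: it only knows about the EventBus interface."""

    def on_audio_ingested(event: dict) -> None:
        # Placeholder for real transcription work.
        transcripts.append(f"transcript-for-{event['audio_id']}")
        bus.publish("transcript.ready", {"audio_id": event["audio_id"]})

    bus.subscribe("audio.ingested", on_audio_ingested)


bus = InMemoryBus()
transcripts = []
ready = []
wire_transcription(bus, transcripts)
bus.subscribe("transcript.ready", ready.append)
bus.publish("audio.ingested", {"audio_id": "a1"})
```

After the last line runs, `transcripts` holds one entry and `ready` holds the follow-up event. Swapping the backend means writing another class with the same two methods; `wire_transcription` stays untouched.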

All external access flows through a central API gateway. It handles authentication, rate limiting, logging, and audit trails inside a tightly controlled, VPN-protected environment. This gives me a single, enforceable boundary around the system. The gateway can also be repurposed for other projects; it's just another DNS entry away.
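
To make the "single boundary" idea concrete, here is a small Python sketch of a gateway that authenticates, rate-limits, and audits every request in one place. It is an illustration under stated assumptions, not the real gateway: the `Gateway` and `TokenBucket` classes, the API-key lookup, the token-bucket algorithm, and the limits (2 requests of burst, 1 request per second of refill) are all hypothetical choices.

```python
class TokenBucket:
    """Per-tenant token bucket. Capacity and refill rate are illustrative."""

    def __init__(self, capacity: float, refill_per_second: float) -> None:
        self.capacity = capacity
        self.refill_per_second = refill_per_second
        self.tokens = capacity
        self.last_seen = 0.0

    def allow(self, now: float) -> bool:
        # Refill according to elapsed time, never exceeding capacity.
        elapsed = max(0.0, now - self.last_seen)
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_per_second)
        self.last_seen = now
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False


class Gateway:
    """One choke point: authenticate, rate-limit, then record the outcome."""

    def __init__(self, api_keys: dict) -> None:
        self.api_keys = api_keys  # hypothetical mapping: api key -> tenant id
        self.buckets = {}         # tenant id -> TokenBucket
        self.audit_log = []       # (timestamp, tenant, path, status)

    def handle(self, api_key: str, path: str, now: float) -> int:
        tenant = self.api_keys.get(api_key)
        if tenant is None:
            status = 401  # unknown credentials
        else:
            if tenant not in self.buckets:
                self.buckets[tenant] = TokenBucket(capacity=2, refill_per_second=1.0)
            status = 200 if self.buckets[tenant].allow(now) else 429
        # Every request is audited, whether it was allowed or rejected.
        self.audit_log.append((now, tenant or "anonymous", path, status))
        return status


gateway = Gateway({"key-1": "tenant-a"})
burst = [gateway.handle("key-1", "/transcripts", now=0.0) for _ in range(3)]
later = gateway.handle("key-1", "/transcripts", now=1.0)
denied = gateway.handle("wrong-key", "/transcripts", now=1.0)
```

The clock is passed in as `now` so the behavior is deterministic; a real gateway would read a monotonic clock and keep bucket state somewhere shared.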

Lightweight Clients, Heavy Processing Where It Belongs

Audetic’s clients are intentionally thin.

The UI is focused on interaction and visualization, not computation. Anything expensive or complex happens elsewhere.

Processing-heavy workloads run in specialized command-line tools and backend services written in Swift, Scala, and Python. This keeps the client responsive and allows me to scale compute independently of user-facing interfaces.

It also lets me experiment freely. I can prototype new processing stages or AI workflows without touching the UI at all.

A Data Pipeline Built for Extension

Most of Audetic’s real value lives in its data pipeline.

The pipeline is built in Scala and leans heavily on functional patterns to keep transformations explicit and predictable. It handles transcription, summarization, enrichment, and downstream AI-driven analysis with minimal overhead.

Jobs are actor-based and triggered by event consumers. The flow is deliberately acyclic. Data moves forward through clearly defined stages. That makes the system easier to reason about and easier to extend when new use cases emerge.
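
The acyclic property can be checked mechanically. The sketch below is in Python rather than Scala and uses the standard library's `graphlib`. The stage names and their dependencies are assumptions that loosely follow the stages named in this post. It derives a valid execution order and rejects any stage graph that contains a cycle.

```python
from graphlib import CycleError, TopologicalSorter

# Hypothetical stage graph: each stage maps to the stages it depends on.
STAGES = {
    "transcribe": {"ingest"},
    "summarize": {"transcribe"},
    "enrich": {"transcribe"},
    "analyze": {"summarize", "enrich"},
}


def run_order(stages: dict) -> list:
    """Return one valid execution order; raises CycleError on a cycle."""
    return list(TopologicalSorter(stages).static_order())


def is_acyclic(stages: dict) -> bool:
    """True when data can only move forward through the stages."""
    try:
        run_order(stages)
        return True
    except CycleError:
        return False


order = run_order(STAGES)

# Adding a new stage is one more entry; the check still guards against loops.
extended = {**STAGES, "export": {"analyze"}}
looped = {**STAGES, "ingest": {"analyze"}}
```

In this sketch `order` starts with `ingest` and ends with `analyze`, while `summarize` and `enrich` may appear in either order because neither depends on the other.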

Several pieces of this pipeline are backed by open source projects I have been building alongside Audetic, including an object storage service and a Scala library for JSON Schema handling, OpenAPI generation, and AI agent orchestration. Those projects exist independently, but Audetic is where they are put to use.

Security Is a Baseline, Not a Feature

Security is not something I plan to “add later.”

Tenant isolation is enforced at the database level using Postgres row-level security. Internal services communicate over private networks. All public APIs are protected with TLS and strict authentication.
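
For readers who have not used row-level security, here is a rough Python sketch of the statements involved. The table name, the `tenant_id` column (assumed to be a `uuid`), the `tenant_isolation` policy name, and the `app.tenant_id` session setting are all hypothetical and are not taken from Audetic's schema. The helper only builds SQL strings; running them against Postgres is left to whatever database driver is in use.

```python
import re

# Only plain lowercase identifiers are accepted, since the table name is
# interpolated into DDL and cannot be passed as a bound parameter.
_IDENTIFIER = re.compile(r"[a-z_][a-z0-9_]*")


def rls_statements(table: str) -> list:
    """Build the DDL that restricts a table's rows to the current tenant."""
    if not _IDENTIFIER.fullmatch(table):
        raise ValueError(f"unsafe table name: {table!r}")
    return [
        # Turn RLS on; with no matching policy, non-owner roles see no rows.
        f"ALTER TABLE {table} ENABLE ROW LEVEL SECURITY",
        # Apply policies to the table owner as well.
        f"ALTER TABLE {table} FORCE ROW LEVEL SECURITY",
        # Rows are visible only when tenant_id matches the session setting.
        f"CREATE POLICY tenant_isolation ON {table} "
        "USING (tenant_id = current_setting('app.tenant_id')::uuid)",
    ]


# Run at the start of each transaction to scope it to one tenant.
# The %s placeholder style is an assumption about the driver.
SET_TENANT = "SELECT set_config('app.tenant_id', %s, true)"

statements = rls_statements("transcripts")
```

With a policy like this in place, a query that forgets its `WHERE tenant_id = ...` clause still returns only the current tenant's rows, which is the point of enforcing isolation in the database rather than in application code.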

The system follows a zero-trust model. Every request is verified. Data is encrypted in transit and at rest using AES-256. Customer files live on encrypted storage volumes by default.

None of this is exotic. It is simply easier to do correctly early than to retrofit once real data and real users are involved.

Containerized CI/CD

Every service is containerized. Docker images are built and deployed using GitHub Actions. Testing, builds, and deployments are automated end to end.

Because everything is containerized, I am not tied to a single provider. Audetic can run on DigitalOcean, AWS, or on-prem infrastructure with minimal changes. That flexibility matters long-term, especially for a project that is meant to evolve over years, not quarters.

For observability, I use OpenTelemetry paired with ClickHouse via HyperDX. Logs, traces, and metrics are collected consistently across services, which makes debugging and performance tuning far less painful than they would otherwise be.

Architecture as Leverage

I am not building Audetic this way because it's the latest tech trend (it's not).

I am building it this way because I have seen what happens when architecture is treated as optional. Shortcuts compound. Teams slow down. Rewrites become inevitable.

By designing for multi-tenancy, security, and service boundaries from the start, I have avoided entire classes of problems that usually show up later and cost far more to fix.

This is not about perfection. It is about optionality.

Looking Ahead

Audetic is still early.

The architecture is intentionally capable of supporting things I have not built yet. More advanced multi-tenancy. Collaboration features. Compliance requirements. Higher throughput...

I plan to continue evolving both the platform and the open source components that support it. This post is just a snapshot. The real work is ongoing.